In this post you can read about the progress of my project in 2019, after April. You can also read my previous posts, because now and then I added some new examples…

I hope to post more this year.

Musical findings

In 2019 I first experimented a lot with my recorded sounds in Ableton, and later with some sounds from Spitfire Audio plugins. The sounds of these plugins are based on recordings of real instruments, orchestras, the London metro, etc. They sometimes have funny and unpredictable effects, especially when the ‘Dynamics’ effect is combined with long sounds. I experimented a lot with these sounds in my program, because I wanted to find out whether they would combine well with, for example, my wind and sea sounds.

Independently of this, but at the same time, I discovered the works of the composer György Sándor Ligeti. I saw the very impressive orchestral performance of the piece Atmosphères by the Berliner Philharmoniker, conducted by Sir Simon Rattle. Using a technique he called micropolyphony, Ligeti created very dense ‘soundscapes’ built from sounds that move at different speeds and have different tonal spacings. Here I quote how Ligeti described it himself:

Technically speaking I have always approached musical texture through part-writing. Both Atmosphères and Lontano have a dense canonic structure. But you cannot actually hear the polyphony, the canon. You hear a kind of impenetrable texture, something like a very densely woven cobweb. I have retained melodic lines in the process of composition […]. The polyphonic structure does not come through, you cannot hear it; it remains hidden in a microscopic, underwater world, to us inaudible. I call it micropolyphony (such a beautiful word!)

I was struck by the similarity between the sound effects of the Spitfire plugins in my project and the orchestral sounds of Ligeti. This discovery opened my eyes to a new musical area. For me, Ligeti bridged the gap between traditional orchestral compositions and soundscapes.

Although I prefer to use my own recordings, using the plugin sounds and listening to Ligeti gave me a better understanding of when sounds are musical, or produce music, in my opinion. Based on these findings, I begin to see great opportunities to create new music with my recordings, but more research is still needed to capture the sounds in nature that can be useful.

I will also concentrate on microtonal effects: instead of the discrete semitone steps of the Western chromatic scale, I will use more subtle pitch changes.
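To make "more subtle pitch changes" concrete: pitch steps smaller than a semitone are usually measured in cents, where 100 cents is one equal-tempered semitone and 1200 cents is one octave. The following is only a small sketch of that arithmetic, not something taken from my program; the function names are made up for illustration.

```python
import math


def cents_to_ratio(cents: float) -> float:
    """Frequency ratio for a pitch shift in cents (1200 cents = one octave)."""
    return 2.0 ** (cents / 1200.0)


def shift_frequency(freq_hz: float, cents: float) -> float:
    """Frequency obtained by shifting freq_hz up (or down) by the given cents."""
    return freq_hz * cents_to_ratio(cents)


def ratio_to_cents(ratio: float) -> float:
    """Inverse of cents_to_ratio: size of an interval in cents."""
    return 1200.0 * math.log2(ratio)


if __name__ == "__main__":
    a4 = 440.0
    # A quarter tone is 50 cents, halfway between two chromatic steps.
    print(shift_frequency(a4, 50))
    # A barely audible drift of 10 cents.
    print(shift_frequency(a4, 10))
```

A semitone corresponds to a ratio of about 1.0595, so a shift of 10 or 20 cents changes the frequency by only around one percent, which is the kind of range where a sound starts to "bend" rather than jump to another note.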

Audio recordings

In summer, apart from the motorbikes and boom cars of a few tourists, El Hierro is very quiet: birds are silent, the sea is calm, everything is at rest. Often you only hear your own heartbeat. Now, at the turn of the year, the singing of the birds starts again, you hear the bees, and the sea and the wind get rougher.

I have already made a number of new recordings based on my new experiences, and this expedition continues this year.

at Piedra el Rey, on the footpath to the Fuente de Mencáfete

Programming

While experimenting with my sounds, making movies, using my notes and searching through folders on my computer, I was getting tired of the way my process was hindered by the implied structure of my computer. So I started searching for tools to make life easier. The result is a rather complete set of software tools that I use side by side to create new instruments with my recordings, make notes, describe findings, etc. Here is an overview.

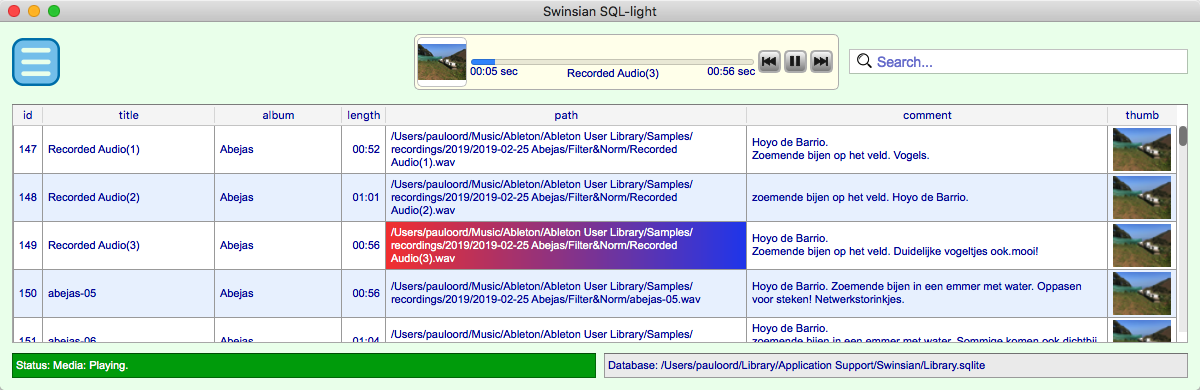

To keep track of all my recordings, pictures, etc., I used a journal: a Word document with all data and pictures. But for experimenting with the sounds this was not very useful; it required a lot of searching, switching applications and so on. So I started to look for a better tool and came upon Swinsian, which is comparable to iTunes. This software is OK, but I made a new user interface on top of the Swinsian database for faster and more precise searching, playing, copying and pasting, and annotating. It now works very smoothly and I use it side by side with Ableton to build new instruments.

screenshot of the maintenance application

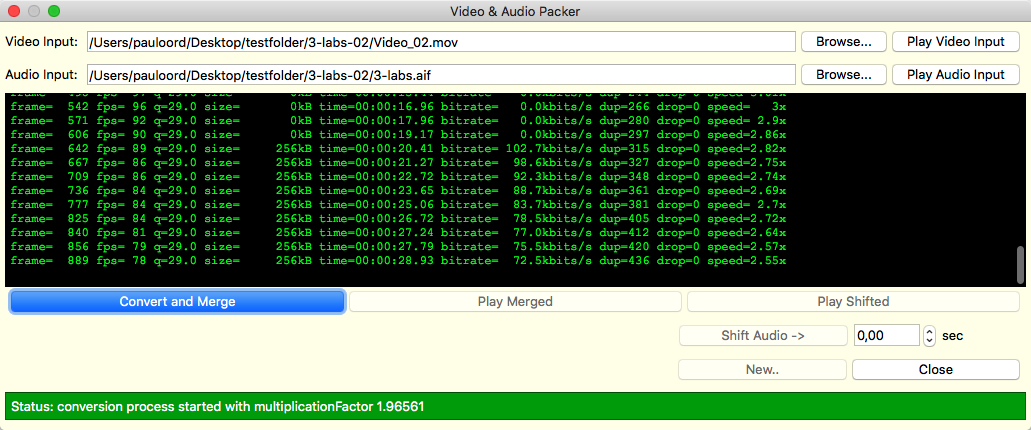

To synchronise the recorded videos from my detection application with audio from Ableton, I used some basic ffmpeg commands built into an AppleScript. This was not as flexible as I needed, especially when the audio had to be shifted by fractions of a second to line up with the video. Synchronising audio and video is a time-consuming process, so a good and responsive tool is not a luxury. Standard commercial applications are either clumsy at these tasks or very expensive. My detection application generates videos with a frame rate nearly twice as high as the final result requires. I did this because the detection mechanism can cause some timing irregularities in the video recording, due to limited processor capacity. When the frame rate is scaled back afterwards to match the audio, these irregularities smooth out and disappear. So I ‘wrapped’ the ffmpeg commands in a C++ program that aligns audio and video with a precision of 0.01 s and has a simple, efficient user interface.
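As an illustration of what such a wrapper has to produce, here is a minimal sketch that assembles one ffmpeg command line. It is written in Python for brevity, while my actual tool is a C++ program, and the exact commands I use are not shown here: the function name, file names and the choice of options are assumptions. It relies only on standard ffmpeg options: `-itsoffset` shifts the timestamps of the input that follows it, `-map` selects the video and audio streams, `-r` sets the output frame rate and `-shortest` stops at the end of the shorter stream.

```python
def build_sync_command(video_path, audio_path, output_path,
                       audio_offset_s, output_fps):
    """Build an ffmpeg argument list that muxes video and audio.

    audio_offset_s shifts the audio relative to the video and is rounded
    to 0.01 s; output_fps is the (lower) frame rate of the final movie.
    All names here are hypothetical and only serve the illustration.
    """
    offset = f"{audio_offset_s:.2f}"  # 0.01 s precision
    return [
        "ffmpeg",
        "-i", video_path,       # input 0: video from the detection program
        "-itsoffset", offset,   # applies to the next input (the audio)
        "-i", audio_path,       # input 1: audio exported from Ableton
        "-map", "0:v:0",        # take the video stream from input 0
        "-map", "1:a:0",        # take the audio stream from input 1
        "-r", str(output_fps),  # scale the frame rate back for the result
        "-shortest",            # stop when the shorter stream ends
        output_path,
    ]


if __name__ == "__main__":
    cmd = build_sync_command("detection.mp4", "ableton.wav",
                             "result.mp4", 0.237, 25)
    print(" ".join(cmd))
```

The list can be handed to `subprocess.run(cmd, check=True)`. Building the arguments as a list, rather than one long string, avoids quoting problems with spaces in file names.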

screenshot of the synchronize application

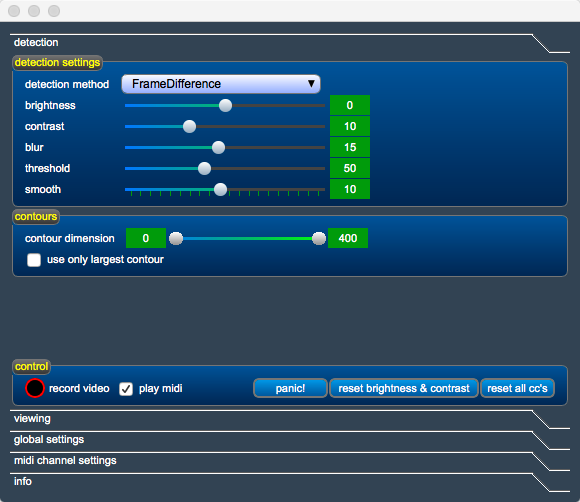

The third programming project was the complete rebuild of the user interface of my original detection program. During my work on the previous programs, I learned a lot more about the Qt/C++ programming environment. This rebuild made the program structurally less complicated and easier to maintain. My previous remarks that it was finished were a bit premature, but now I think there is little left to change or add.

screenshots of the detection application

One thing I want to mention is that I am currently working on a simple client-server mechanism to use the webcam of another Mac in the same network. It is based on a robust and fast mechanism (a UDP connection and JPEG compression). In my ‘free time’ I will try to perfect this… It might open up the possibility of performing from a more independent spot, away from the main computer that runs Ableton and my detection application.
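One detail that any UDP-plus-JPEG scheme has to deal with is that a compressed frame is usually larger than what fits comfortably in a single datagram. The sketch below shows one common way to handle this, and it is an assumption, not a description of my own protocol: each frame is split into chunks with a small header (frame number, chunk index, chunk count), and the receiver simply drops a frame when a chunk is missing. The header layout, the 1400-byte payload size and the function names are all chosen for illustration.

```python
import struct

# Hypothetical header: frame id (uint32), chunk index and count (uint16 each).
HEADER = struct.Struct("!IHH")
MAX_PAYLOAD = 1400  # assumed to stay below a typical Ethernet MTU


def split_frame(frame_id, jpeg_bytes, max_payload=MAX_PAYLOAD):
    """Split one JPEG-compressed frame into datagram-sized packets."""
    count = max(1, -(-len(jpeg_bytes) // max_payload))  # ceiling division
    return [
        HEADER.pack(frame_id, i, count)
        + jpeg_bytes[i * max_payload:(i + 1) * max_payload]
        for i in range(count)
    ]


def reassemble(datagrams):
    """Return (frame_id, jpeg_bytes), or None if the frame is incomplete.

    Packets may arrive in any order. A frame with missing chunks is
    dropped rather than repaired, since the next frame follows shortly.
    """
    chunks = {}
    frame_id = count = None
    for packet in datagrams:
        fid, index, cnt = HEADER.unpack_from(packet)
        if frame_id is None:
            frame_id, count = fid, cnt
        elif (fid, cnt) != (frame_id, count):
            return None  # packets of different frames got mixed
        chunks[index] = packet[HEADER.size:]
    if count is None or set(chunks) != set(range(count)):
        return None
    return frame_id, b"".join(chunks[i] for i in range(count))
```

On the sending side each packet would go out with `socket.sendto(packet, (host, port))` on a `SOCK_DGRAM` socket. Dropping incomplete frames instead of retransmitting them keeps the latency low, which matters more for live detection than showing every single frame.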